Understanding Goroutines & The Go Scheduler (GMP Model)

Introduction to the Go Scheduler and the GMP Model

Go’s concurrency model is one of the main reasons behind its popularity for building scalable systems. While launching a goroutine with the go keyword looks trivial, the mechanics behind it are powerful and carefully designed.

This post explains goroutines from the ground up and then dives deep into how Go schedules them using the GMP scheduler model. Everything here focuses on how things actually work.

What Is a Goroutine?

A goroutine is a lightweight unit of execution managed entirely by the Go runtime, not by the operating system.

Key characteristics of goroutines:

Lightweight compared to OS threads

Dynamically growing stack

User-space threads

Exist within the same address space

Managed by the Go runtime scheduler

Multiplexed onto OS threads

Initial stack size is ~2 KB

Communicate via channels or shared memory
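The dynamically growing stack is easy to observe. The sketch below (the function names and the depth of 100,000 are arbitrary illustrations) runs a deep, non-tail recursion inside a fresh goroutine. Although the goroutine starts with a small stack of roughly 2 KB, the runtime grows it on demand instead of overflowing.

```go
package main

import "fmt"

// depth recurses n times; every call needs its own stack frame,
// so the goroutine's stack must grow well beyond its initial size.
func depth(n int) int {
	if n == 0 {
		return 0
	}
	return depth(n-1) + 1
}

// deepInGoroutine (a hypothetical helper) runs the recursion in a new
// goroutine and returns its result over a channel.
func deepInGoroutine(n int) int {
	result := make(chan int)
	go func() { result <- depth(n) }()
	return <-result
}

func main() {
	fmt.Println(deepInGoroutine(100_000)) // prints 100000
}
```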

Go promotes a strong concurrency principle:

Do not communicate by sharing memory; instead, share memory by communicating.

This philosophy is central to Go’s design and is the reason channels are preferred over locks in many cases.
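A minimal sketch of "share memory by communicating", using a hypothetical sumOfSquares helper: each worker goroutine sends its result over a channel, and only the receiving goroutine ever touches the running total, so no mutex is needed.

```go
package main

import "fmt"

// sumOfSquares starts one goroutine per input. Workers never write to a
// shared variable; they hand their results to the collector via a channel.
func sumOfSquares(nums []int) int {
	results := make(chan int) // unbuffered: each send is a direct handoff

	for _, n := range nums {
		go func(n int) {
			results <- n * n // communicate the value instead of sharing state
		}(n)
	}

	total := 0 // owned exclusively by this goroutine
	for range nums {
		total += <-results // receive exactly one result per worker
	}
	return total
}

func main() {
	fmt.Println(sumOfSquares([]int{1, 2, 3, 4})) // prints 30
}
```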

Goroutines vs OS Threads

Goroutines are not OS threads.

OS threads are heavyweight, expensive to create, and managed by the OS

Goroutines are lightweight, cheap to create, and managed by Go

A Go program can run thousands or even millions of goroutines, a scale that would be impractical with one OS thread per task.
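To get a feel for how cheap goroutines are, the following sketch starts one goroutine per unit of work and waits for all of them with a sync.WaitGroup. The spawn helper and the count of 100,000 are arbitrary illustrations; the code assumes Go 1.19 or later for atomic.Int64.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// spawn (a hypothetical helper) launches n goroutines that each increment
// an atomic counter, waits for all of them, and returns the final count.
func spawn(n int) int64 {
	var wg sync.WaitGroup
	var counter atomic.Int64 // requires Go 1.19+

	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			counter.Add(1)
		}()
	}
	wg.Wait() // block until every goroutine has called Done
	return counter.Load()
}

func main() {
	fmt.Println(spawn(100_000)) // prints 100000
}
```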

Goroutines Are Managed by the Go Runtime

Some important points:

Goroutines are scheduled in user space

The OS does not know about goroutines

The Go runtime maps goroutines onto OS threads

Multiple goroutines can run on a single OS thread

Multiple OS threads can exist inside one Go process

This mapping is handled using the GMP scheduler model.
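Multiplexing can be seen directly by restricting the program to a single P with runtime.GOMAXPROCS(1). In this sketch (the ring helper is hypothetical), thousands of goroutines are chained together by channels and pass a counter along. They all make progress even though only one of them can execute Go code at any moment, because each one parks on its channel receive and lets the scheduler run another.

```go
package main

import (
	"fmt"
	"runtime"
)

// ring chains n goroutines with channels and passes a token through all of
// them; each goroutine adds 1 before forwarding it to the next.
func ring(n int) int {
	first := make(chan int)
	in := first
	for i := 0; i < n; i++ {
		out := make(chan int)
		go func(in <-chan int, out chan<- int) {
			v := <-in // parks this goroutine until its predecessor sends
			out <- v + 1
		}(in, out)
		in = out
	}
	first <- 0   // start the token at the head of the chain
	return <-in // receive it from the last goroutine
}

func main() {
	// Allow only one P: goroutines are multiplexed, not run in parallel.
	prev := runtime.GOMAXPROCS(1)
	defer runtime.GOMAXPROCS(prev)

	fmt.Println(ring(10_000)) // prints 10000
}
```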

The GMP Scheduler Model

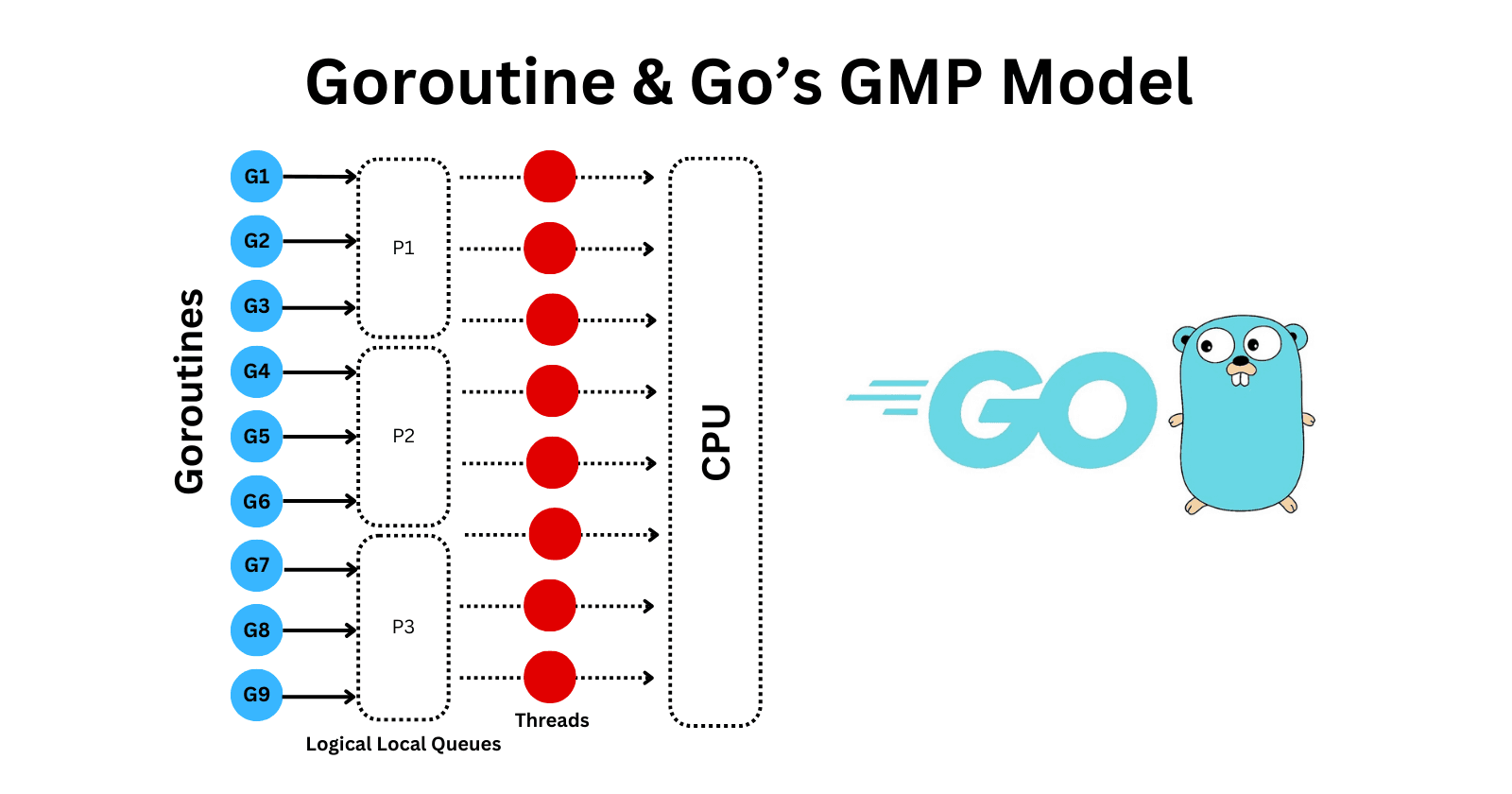

The Go scheduler internally uses the GMP model:

G : Goroutine

M : Machine (OS Thread)

P : Processor (Logical scheduling context)

This model defines how goroutines are executed efficiently across CPU cores.

G : Goroutine

A G represents a goroutine.

It contains:

Stack

Saved program counter

Saved CPU register state (so it can resume where it left off when rescheduled)

Metadata (state, ID, etc.)

A goroutine cannot execute by itself. It must be:

Assigned to a P (processor)

Executed by an M (machine)
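Go intentionally offers no public API for reading a goroutine's ID, but some of the G's metadata shows up in stack traces. As a small illustration (the helper name is made up), runtime.Stack with its all argument set to false returns the calling goroutine's trace, whose first line has the form goroutine <id> [<state>]:.

```go
package main

import (
	"fmt"
	"runtime"
	"strings"
)

// currentGoroutineHeader (hypothetical helper) returns the first line of the
// calling goroutine's stack trace, which contains the G's ID and state.
func currentGoroutineHeader() string {
	buf := make([]byte, 1024)
	n := runtime.Stack(buf, false) // false: trace only the calling goroutine
	header, _, _ := strings.Cut(string(buf[:n]), "\n")
	return header
}

func main() {
	// Prints the header line, including this goroutine's ID and its state.
	fmt.Println(currentGoroutineHeader())
}
```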

M : Machine (OS Thread)

Represents an OS thread

Created by the Go runtime

Executes Go code

An M must acquire a P to run goroutines
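One way to peek at Ms from inside a program is the predefined "threadcreate" profile in runtime/pprof, which records the creation of OS threads. The sketch below (threadsCreated is a hypothetical helper) simply counts its entries; this reports threads created so far, which the runtime usually keeps around when idle, not threads currently running Go code.

```go
package main

import (
	"fmt"
	"runtime/pprof"
)

// threadsCreated reports how many OS threads (Ms) the runtime has created,
// using the built-in "threadcreate" profile.
func threadsCreated() int {
	return pprof.Lookup("threadcreate").Count()
}

func main() {
	fmt.Println("OS threads created so far:", threadsCreated())
}
```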

P : Processor (Logical Execution Context)

A P represents execution capacity.

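Each P:

Holds a local run queue of goroutines. When a goroutine is created, it is added to the local queue of the P running the goroutine that created it, so initially many goroutines may accumulate on a single P. In current Go releases this queue is bounded (256 entries); when it is full, half of it is moved to the global run queue.

Contains scheduler state

Limits parallelism: at any instant, at most one goroutine executes Go code per P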

Key rule:

Parallelism is limited by the number of Ps, not by the number of goroutines or OS threads.

The number of Ps is controlled by GOMAXPROCS; by default, it equals the number of logical CPUs available to the process.
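The current setting can be queried at runtime: calling runtime.GOMAXPROCS with an argument below 1 returns the value without changing it, and runtime.NumCPU reports the logical CPUs usable by the process. A minimal sketch (currentProcs is a hypothetical helper):

```go
package main

import (
	"fmt"
	"runtime"
)

// currentProcs returns the number of Ps without modifying it:
// runtime.GOMAXPROCS(n) with n < 1 only queries the setting.
func currentProcs() int {
	return runtime.GOMAXPROCS(0)
}

func main() {
	fmt.Println("logical CPUs:", runtime.NumCPU())
	fmt.Println("Ps (GOMAXPROCS):", currentProcs())
}
```

Setting the GOMAXPROCS environment variable (for example GOMAXPROCS=2) overrides the default when the program starts.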

Important detail:

The number of OS threads (M) can be greater than the number of processors (P).

Why?

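An OS thread can block in the kernel during a system call (for example, some file I/O), and while blocked it cannot run any Go code

The runtime hands that thread's P to another M, creating a new thread if no idle one is available, so other goroutines are not stalled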

How Goroutines Are Executed (High-Level Flow)

Many Goroutines (G) are created by the program

Each newly created goroutine is placed into the local run queue of a Processor (P)

Each Processor (P) manages its own queue of runnable goroutines

An OS thread (M) acquires a Processor (P)

The OS thread (M) picks a goroutine (G) from the P’s local run queue

The OS thread (M) executes the goroutine (G)

The goroutine runs on the CPU until it blocks, completes, or is preempted
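The flow above can be modeled with a deliberately tiny, single-threaded sketch. The G, M, and P types below are hypothetical illustrations, far simpler than the runtime's real g, m, and p structs: the M holds a P, pops goroutines from the P's local queue in FIFO order, and runs each one to completion. The real runtime also keeps a runnext slot that lets a freshly created goroutine run before older queued ones, and real goroutines can block or be preempted midway; neither is modeled here.

```go
package main

import "fmt"

// G, P, and M are hypothetical toy types, not the runtime's structs.
type G struct {
	id int
	fn func()
}

type P struct {
	id   int
	runq []*G // local run queue (FIFO in this sketch)
}

type M struct {
	id int
	p  *P // the P this M currently holds
}

// spawn mimics a go statement: create a G and enqueue it on p's local queue.
func (p *P) spawn(id int, fn func()) {
	p.runq = append(p.runq, &G{id: id, fn: fn})
}

// runAll lets the M pop each G from its P's queue and run it to completion,
// returning the IDs in execution order.
func (m *M) runAll() []int {
	var order []int
	for len(m.p.runq) > 0 {
		g := m.p.runq[0]
		m.p.runq = m.p.runq[1:]
		g.fn()
		order = append(order, g.id)
	}
	return order
}

func main() {
	p := &P{id: 0}
	m := &M{id: 0, p: p} // the M acquires the P
	for i := 1; i <= 3; i++ {
		p.spawn(i, func() {})
	}
	fmt.Println(m.runAll()) // prints [1 2 3]
}
```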

Global Run Queue

Go also maintains a global run queue.

Purpose:

Ensure fairness

Handle load balancing

Support scheduling when local queues are empty or unsuitable

Even when a P's local queue still has work, the scheduler periodically pulls goroutines from the global queue so that goroutines waiting there are not starved.
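The sketch below models that fairness check with a hypothetical single-P scheduler. The interval of 61 matches the tick interval the real runtime's scheduler uses for this check, but everything else (the sched type, the string goroutine names, next, and firstGlobalTick) is invented for illustration. Without the periodic check, the global goroutine would wait until all 100 local ones had run.

```go
package main

import "fmt"

// globalCheckInterval mirrors the interval of the real scheduler's check.
const globalCheckInterval = 61

// sched is a toy model: one local queue, one global queue, a tick counter.
type sched struct {
	tick   int
	local  []string
	global []string
}

// next prefers the local queue but takes from the global queue every
// globalCheckInterval ticks, so global work cannot starve.
func (s *sched) next() (string, bool) {
	s.tick++
	if s.tick%globalCheckInterval == 0 && len(s.global) > 0 {
		return pop(&s.global), true
	}
	if len(s.local) > 0 {
		return pop(&s.local), true
	}
	if len(s.global) > 0 {
		return pop(&s.global), true
	}
	return "", false
}

func pop(q *[]string) string {
	g := (*q)[0]
	*q = (*q)[1:]
	return g
}

// firstGlobalTick queues localCount local goroutines plus one global one
// and returns the tick at which the global goroutine was scheduled.
func firstGlobalTick(localCount int) int {
	s := &sched{global: []string{"G-global"}}
	for i := 0; i < localCount; i++ {
		s.local = append(s.local, fmt.Sprintf("G-local-%d", i))
	}
	for {
		g, ok := s.next()
		if !ok {
			return -1
		}
		if g == "G-global" {
			return s.tick
		}
	}
}

func main() {
	fmt.Println(firstGlobalTick(100)) // prints 61
}
```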

Work Stealing

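If a P runs out of runnable goroutines (and the global run queue has nothing for it either), it:

Picks another P, trying candidates in random order

Steals about half of that P’s runnable goroutines into its own local run queue

This keeps CPU cores busy and maximizes parallelism.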

This mechanism is called work stealing and is crucial for Go’s scalability.
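The core of work stealing is a queue split. This hypothetical sketch moves half of a victim P's queue, rounded up, from its head to an idle P. The rounding matches the n - n/2 computation in the runtime's runqgrab, but the real implementation works on lock-free ring buffers with atomic operations and tries victims in random order.

```go
package main

import "fmt"

// P is a toy stand-in for a processor with a local run queue.
type P struct {
	runq []int // goroutine IDs, oldest first
}

// stealHalf moves half (rounded up) of victim's queue, taken from its head,
// onto thief's queue and returns how many goroutines were stolen.
func stealHalf(thief, victim *P) int {
	n := len(victim.runq) - len(victim.runq)/2 // half, rounded up
	thief.runq = append(thief.runq, victim.runq[:n]...)
	victim.runq = victim.runq[n:]
	return n
}

func main() {
	idle := &P{}
	busy := &P{runq: []int{1, 2, 3, 4, 5}}
	n := stealHalf(idle, busy)
	fmt.Println(n, idle.runq, busy.runq) // prints 3 [1 2 3] [4 5]
}
```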

What Happens When a Goroutine Blocks?

When a goroutine:

Performs a blocking system call

Waits on I/O

Sleeps

Waits on a channel

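Then, the outcome depends on how it blocks:

Channel waits, sleeps, and network I/O: the goroutine is parked, and the M keeps its P and immediately runs another runnable goroutine, so the OS thread itself never blocks

Blocking system calls (for example, many file operations): the M blocks in the kernel along with the goroutine, so the runtime detaches its P (right away, or once the call has run for a short while) and hands it to another M, waking an idle one or creating a new one

When the system call returns, the goroutine tries to reacquire a P; if none is free, it is placed on the global run queue

In both cases, execution continues without blocking the entire scheduler

This design ensures that blocking operations do not reduce parallelism.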
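This behavior is easy to demonstrate for goroutines waiting on a channel or sleeping. In this sketch (the handoff helper is hypothetical), the program is limited to a single P; goroutine A blocks receiving from a channel and goroutine B sleeps before sending. If either wait stalled the only P, nothing else could run and the program would hang. Instead, the scheduler parks each waiting goroutine and runs others on the same P. Blocking system calls are harder to show portably, so they are not covered here.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// handoff runs with one P. A waits on a channel, B sleeps and then sends;
// both waits park their goroutine instead of stalling the P.
func handoff() int {
	prev := runtime.GOMAXPROCS(1)
	defer runtime.GOMAXPROCS(prev)

	ch := make(chan int)
	result := make(chan int)

	go func() { // A
		v := <-ch // parks A; the scheduler runs another goroutine on this P
		result <- v * 2
	}()

	go func() { // B
		time.Sleep(10 * time.Millisecond) // B parks too; nothing stalls
		ch <- 21                          // makes A runnable again
	}()

	return <-result // the main goroutine also parks until A replies
}

func main() {
	fmt.Println(handoff()) // prints 42
}
```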

M and P Relationship

Important observations:

An OS thread (M) holds a P only while executing Go code

Entering a blocking system call, or running out of runnable work, causes it to release the P; preemption only swaps which goroutine runs, while the M keeps its P

Each OS thread can execute many goroutines over time

Only one goroutine runs at a time per P

Goroutine Creation Timing

A common misconception concerns when a goroutine comes into existence:

A goroutine is created and enqueued when the go statement executes, not when the function is declared.

go doWork()

At this moment:

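A new G is created, and the function value and its arguments are evaluated immediately in the calling goroutine

It is placed into the current P's local run queue

The scheduler decides when it runs; the go statement returns without waiting for it to start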
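Because the go statement evaluates the function value and its arguments immediately in the calling goroutine (as the Go specification states), each goroutine below receives the loop value that existed when its go statement ran, even if it starts executing much later. A minimal sketch with a hypothetical launch helper:

```go
package main

import (
	"fmt"
	"sort"
)

// launch starts one goroutine per iteration, passing i as an argument.
// The argument is evaluated at the go statement, not when the goroutine runs.
func launch(n int) []int {
	results := make(chan int, n) // buffered so senders never block
	for i := 0; i < n; i++ {
		go func(v int) { results <- v }(i)
	}
	got := make([]int, 0, n)
	for i := 0; i < n; i++ {
		got = append(got, <-results)
	}
	sort.Ints(got) // execution order is up to the scheduler, so sort first
	return got
}

func main() {
	fmt.Println(launch(5)) // prints [0 1 2 3 4]
}
```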

Program Startup Flow

When a Go program starts:

The runtime creates multiple Ps (as many as GOMAXPROCS)

Each P gets its own local run queue

The initial OS thread acquires a P

The main goroutine is placed into that P’s run queue

Execution begins

Over time, goroutines are redistributed across Ps using work stealing and the global queue.

Lifecycle of a Goroutine

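Created → Runnable → Running → Blocked → Runnable → Running → Terminated

(A goroutine can cycle through Running, Blocked, and Runnable many times, and a preempted goroutine goes straight from Running back to Runnable.)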

Created: Goroutine is instantiated

Runnable: Waiting in a run queue

Running: Actively executing

Blocked: Waiting on I/O, syscall, channel, etc.

Terminated: Execution finished
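The simplified lifecycle can be written as a small state machine. The GState type and the transition table below are hypothetical and mirror only the five states listed above; the runtime's internal states (such as _Grunnable, _Grunning, _Gwaiting, and _Gdead) are more numerous and are not part of any public API.

```go
package main

import "fmt"

// GState is a hypothetical enum for the simplified lifecycle.
type GState int

const (
	Created GState = iota
	Runnable
	Running
	Blocked
	Terminated
)

func (s GState) String() string {
	return [...]string{"Created", "Runnable", "Running", "Blocked", "Terminated"}[s]
}

// allowed lists the transitions of the simplified model.
var allowed = map[GState][]GState{
	Created:  {Runnable},
	Runnable: {Running},
	Running:  {Blocked, Runnable, Terminated}, // Runnable again when preempted
	Blocked:  {Runnable},                      // woken by a channel, timer, I/O...
}

// valid reports whether a sequence of states follows the allowed transitions.
func valid(path ...GState) bool {
	for i := 1; i < len(path); i++ {
		ok := false
		for _, next := range allowed[path[i-1]] {
			if next == path[i] {
				ok = true
				break
			}
		}
		if !ok {
			return false
		}
	}
	return true
}

func main() {
	fmt.Println(valid(Created, Runnable, Running, Blocked, Runnable, Running, Terminated)) // true
	fmt.Println(valid(Created, Running))                                                   // false
}
```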

Why the GMP Model Works So Well

The GMP scheduler allows Go to:

Scale efficiently across CPU cores

Avoid excessive OS thread creation

Handle blocking operations gracefully

Maximize CPU utilization

Keep concurrency simple for developers

All while writing code as simple as:

go func() {
	// concurrent work
}()

Final Thoughts

Goroutines may look simple, but the Go scheduler is doing a huge amount of work behind the scenes.

Understanding:

Goroutines (G)

OS threads (M)

Processors (P)

Local and global queues

Work stealing

Blocking behavior

…gives you a clear mental model of how Go concurrency actually works.

Once you understand GMP, Go’s concurrency stops feeling magical and starts feeling predictable, powerful, and elegant.